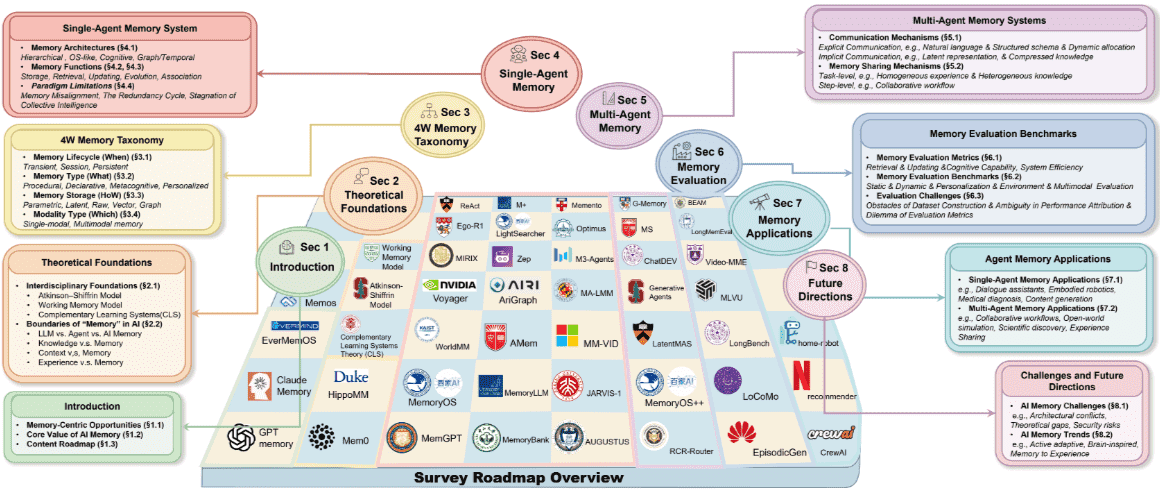

Survey on AI Memory: Theories, Taxonomies, Evaluations, and Emerging Trends¶

总结¶

From Moonlight¶

三句摘要¶

🎯 这篇综述旨在解决当前AI记忆研究的碎片化问题,为LLM驱动的智能体提供一个统一的理论框架和全面的概述。

📖 作者提出了一个结构化的“4W记忆分类法”,详细分析了AI记忆的生命周期、信息类型、存储方式和信息模态,从而系统地整理了现有研究。

🤖 该工作还系统回顾了单智能体和多智能体系统中的记忆架构、功能和评估方法,并讨论了新兴趋势与挑战,为未来AI记忆的发展提供了路线图。

关键词¶

4W Memory Taxonomy: 是一种系统性分类人工智能记忆机制的结构化框架,由“When-What-HoW-Which”四个维度构成。

Cognitive Psychology: 是理解人类思维过程和记忆机制的学科,为人工智能记忆系统的设计提供了理论灵感。

Atkinson–Shiffrin Tri-Store Model: 是认知心理学中的经典模型,将人类记忆概念化为感觉记忆、短时记忆和长时记忆三个相互作用的存储单元。

Complementary Learning Systems Theory: 是认知神经科学中的理论,认为大脑的记忆架构是海马体和新皮质之间协同作用的产物,海马体负责快速编码和索引,新皮质负责渐进式更新和长期存储。

Index and Content Separation Pattern: 是一种AI记忆设计模式,通过维护紧凑的片段键与检索器相结合,指向长期存储中详细丰富的内容,有效克服了上下文窗口固有的容量限制。

Multiphase Consolidation Pattern: 是一种AI记忆设计模式,在关键时刻将近期片段痕迹转化为摘要、反思和可重用技能,从而将长期记忆组织成结构化和通用化的形式。

Structured Coordination Pattern: 是一种AI记忆设计模式,将活动工作空间组织成由中央控制器监督的专用缓冲区,实现口头、视觉和工具相关输出的并行、无干扰维护,并支持决策时的动态集成。

Agent Memory: 指的是一种功能性工作流,它通过感知-规划-行动循环协调以支持自治行为和复杂任务的执行。

LLM Memory: 主要指大型语言模型的计算核心,存在于预训练模型权重中的参数记忆和通过上下文窗口管理的运行时记忆两种特定状态。

Memory vs. Knowledge: 指的是记忆作为随交互动态演化的存储,与知识作为为稳定性而固化并可重复使用的静态沉淀物之间的区别。

Memory vs. Context: 区分了记忆作为应用程序级状态管理器,存在于模型短暂的执行周期之外以封装更广泛的用户交互和代理历史,与上下文作为大型语言模型中的即时执行环境。

Memory vs. Experience: 指的是记忆作为特定交互的基础记录,保留原始数据点,与经验作为更高阶的认知构建,通过处理原始痕迹合成抽象的、可转移的模式。

Memory Lifecycle: 这一维度考察AI智能体系统中记忆的时间跨度,即记忆存在多久以及在何种范围内保持可访问性。

Transient Memory: 指的是在即时输入处理期间短暂存在的记忆,作为进一步处理或存储之前感官输入的临时缓冲区。

Session Memory: 指的是在单个任务执行或会话交互过程中持续存在的记忆,但在此会话结束后不再保留。

Persistent Memory: 指的是能够在单个会话之外持续存在的记忆,并可在多次交互、任务甚至不同智能体实例之间访问。

Memory Type: 这一维度考察记忆捕获的知识的性质,包括程序性技能、陈述性事实、元认知反思和个性化模型。

Procedural Memory: 封装可执行知识,包括技能、行动工作流和工具利用,其主要目标是促进面向目标的行动。

Declarative Memory: 存储事实知识和感知观察,包括环境观察、事实知识库、事件日志和上下文描述。

Metacognitive Memory: 指的是关于智能体自身思维和能力的知识,使其能够跟踪自身表现、识别优缺点、反思过去的行为并调整策略。

Personalized Memory: 存储关于其他智能体和用户的信息,例如他们的偏好、行为和关系,从而使AI智能体能够记住和建模个体。

Memory Storage: 这一维度考察记忆在AI智能体系统中是如何被表示和存储的,决定了记忆的物理形式、访问模式和计算特性。

Implicit Storage: 将记忆存储在模型架构内部,无论是其训练权重(参数记忆)还是处理过程中的隐藏状态(潜在记忆)。

Parametric Memory: 指的是通过预训练或微调等训练过程直接嵌入模型参数和权重中的记忆。

Latent Memory: 指的是不明确存储在模型参数中,而是通过模型的学习潜在空间隐式表示的记忆。

Explicit Storage: 将记忆存储在模型外部,以文本、向量或图等格式存在,使其更容易检索、更新和解释。

Raw Memory: 以文本、视觉和听觉格式存储信息,包括对话历史、压缩对话和其他表示形式,代表了最可解释的存储方法。

Vector Memory: 将信息存储为高维语义空间中的密集、连续值向量,通常由神经网络存储模型生成。

Graph Memory: 将信息编码为实体及其关系的显式网络,通常使用图数据库实现,非常适合表示复杂连接。

Modality Type: 根据其处理的信息格式对AI记忆进行分类,分为单模态和多模态记忆。

Single-modal Memory: 侧重于存储、更新和检索来自单一模态的信息,其中文本是最成熟和广泛采用的形式。

Multimodal Memory: 集成来自多种模态的信息,包括文本、图像、音频和视频,使智能体能够感知和推理复杂环境。

Single-Agent System: 指的是为单个智能体设计的人工智能记忆架构和功能机制。

Memory Architecture: 描述了AI记忆系统中的信息组织形式和设计差异。

Hierarchical Memory Architecture: 是一种为智能体系统设计的记忆架构,通过分层存储结构和动态管理来解决LLM上下文窗口容量有限与对长期信息存储和检索的无限需求之间的矛盾。

OS-like Memory Architectures: 这种架构借鉴操作系统设计,采用分层存储和动态管理机制来解决长期交互中记忆一致性和资源分配的挑战。

Cognitive Evolution Memory Architectures: 这种架构模拟人类认知过程或融合心智理论,使智能体能够开发可演化的记忆和策略系统以进行自我优化。

Graph and Temporal Memory Architectures: 通过图结构(例如,实体-关系图)或时间模型来组织信息,以捕获复杂关系,旨在增强智能体记忆。

Memory Storage Function: 作为AI智能体的核心模块,主要功能是将零碎的观测数据转化为结构化和持久的记忆记录,并建立标准化的索引架构以支持后续检索。

Memory Retrieval: 是指从大规模记忆库中精确检索和整合信息以指导生成过程,从而缓解幻觉并增强推理能力。

Memory Updating: 是修改、替换或整合现有存储内容的过程,确保智能体通过纠正错误或过时信息并在新数据到达时整合零碎知识来防止错误再次发生。

Self-Evolution: 指的是智能体在持续交互和任务执行过程中,动态迭代和优化所获得的知识、技能和行为策略的能力。

Association (AI Memory): 指的是将多模态信号(文本、视觉、音频、交互)整合到连贯的情境模型中以构建记忆的过程。

Multi-Agent Systems (MAS): 是指多个智能体通过记忆机制进行交互和协作以实现集体智能的系统。

Communication Mechanisms (MAS): 指的是多智能体系统中用于介导记忆共享的通信方式,包括显式和隐式两种主要模态。

Explicit Communication (MAS): 涉及智能体之间符号信息的刻意传输,范围从非结构化自然语言对话到高度结构化、正式化的协议。

Implicit Communication (MAS): 允许多智能体系统在没有直接、有意的智能体间消息传递的情况下进行协调,而是通过观察共享环境或内部状态的共享表示来推断他者的意图或状态。

Memory Sharing Mechanisms (MAS): 指的是多智能体系统中,用于促进集体智能共享记忆的机制,通常在任务级别和步骤级别上进行优化。

Task-Level Memory Sharing: 指的是整合不同任务执行的经验,以促进长期演化和跨领域知识转移的机制。

Step-Level Memory Sharing: 指的是在单个协作工作流的精细执行阶段,将特定信息动态分配给相关智能体的过程。

Evaluation Metrics (AI Memory): 衡量AI记忆系统性能的标准,包括记忆检索能力、动态更新能力、高级认知能力和系统效率。

Memory Retrieval Capability: 评估记忆系统准确、全面地定位与当前查询相关信息的能力。

Dynamic Updating Capability: 评估记忆系统正确维护其知识库新鲜度的能力,超越静态信息检索。

Advanced Cognitive Capability: 代表智能体超越简单的存储和检索,利用记忆进行高阶推理的能力。

System Efficiency (AI Memory): 评估AI记忆模块在实际部署中的工程可行性,涵盖延迟、令牌开销和存储效率。

Evaluation Benchmarks (AI Memory): 用于系统化组织记忆评估的测试集,根据其评估任务的核心特征进行分类。

摘要¶

该调查报告《Survey on AI Memory: Theories, Taxonomies, Evaluations, and Emerging Trends》全面审视了AI系统中的记忆机制,强调其在推动LLM驱动智能体实现动态适应、复杂推理和经验学习方面的核心作用。该报告旨在通过统一的理论框架,弥合计算机制与认知心理学中类人记忆过程之间的差距,并提出了一个结构化的“4W Memory Taxonomy”来系统化分析记忆系统。

2 理论基础 (Theoretical Foundations) 报告首先为记忆增强型智能体奠定了概念基础,融合了生物学原理与计算定义:

2.1 跨学科基础 (Interdisciplinary Foundations):

Atkinson–Shiffrin 三阶段模型 (Tri-Store Model):将人类记忆概念化为感觉记忆 (sensory memory)、短时记忆/工作记忆 (short-term/working memory) 和长时记忆 (long-term memory) 三个相互作用的存储阶段,通过控制过程(如注意力、复述、检索)协调。感觉记忆捕获瞬时高保真输入;短时记忆是有限容量的活跃工作空间;长时记忆是长期储存的事实、事件和技能的庞大知识库。

工作记忆模型 (Working Memory Model):Baddeley 和 Hitch 提出,将短时存储重构为多组件工作记忆系统。中央执行器 (central executive) 作为有限容量的控制器,指导注意力并协调资源。它由语音循环 (phonological loop)、视空间画板 (visuospatial sketchpad) 和情节缓冲器 (episodic buffer) 支持,后者整合信息以形成统一的多模态事件。

互补学习系统理论 (Complementary Learning Systems Theory, CLS):该理论将大脑的记忆架构概念化为海马体 (hippocampus) 和新皮层 (neocortex) 之间的协同伙伴关系。海马体快速编码和索引新经验片段,而新皮层则作为深度存储,通过渐进式更新保护现有知识。这种互补分工使得新事件能够快速捕获,并随后在睡眠等安静时期通过海马体重新激活,引导新皮层进行稳定、渐进的整合。

对AI记忆设计的启示 (Implications for AI Memory Design):

索引与内容分离模式 (Index and content separation pattern):通过维护紧凑的情节键和连接到长时存储中详细内容的检索器,克服上下文窗口限制,实现高效检索。

多阶段整合模式 (Multiphase consolidation pattern):在战略性时刻将近期事件痕迹转化为摘要、反思和可复用技能,使长时记忆结构化和通用化。

结构化协调模式 (Structured coordination pattern):将活跃工作空间组织成专业缓冲区,由中央控制器监督,实现口头、视觉和工具相关输出的并行维护和动态集成。

2.2 “记忆”在AI中的边界 (Boundaries of “Memory” in AI):

AI Memory vs. Agent Memory vs. LLM Memory:

LLM memory:主要指计算核心的低级机制,存在于预训练模型权重中的参数记忆和通过上下文窗口管理的运行时记忆,侧重于即时生成准确性。

Agent memory:在此基础上扩展为功能工作流,系统地支持自主行为(感知-规划-行动循环),将数据结构化为程序性、声明性和元认知格式,实现经验学习和策略细化。

AI memory:最广泛的定义,涵盖信息持久性和演化,是终身学习的总体认知概念,目标是持续适应和人类对齐。

Memory vs. Knowledge:Memory是动态存储,通过交互演化,包含参数权重和向量数据库等非参数外部存储;Knowledge是静态沉淀,是经过整理的模式、本体和通用概括。Memory关注“刚刚发生什么”,Knowledge关注“通常如何运作”。两者边界可渗透,记忆可通过整合转化为知识,知识指导记忆形成。

Memory vs. Context:Context主要代表LLM内的即时执行环境,是模型处理特定推理步骤所需的有界计算缓冲区;Memory是应用层面的状态管理器,存在于模型短暂执行周期之外,用于维护用户交互和智能体历史的更大范围。Context在处理过程中被清除或覆盖,而Memory在系统接口层面持续存在。

Memory vs. Experience:Memory是特定交互的基础记录,保存原始数据点,是“发生过什么”的静态存储库,缺乏固有的通用性;Experience是更高阶的认知构建,将原始痕迹合成为抽象的、可迁移的模式,使智能体能将过去上下文中学到的经验泛化到新任务。通过反思和整合机制,智能体将原始情景记录提炼为精炼的认知策略,并存储回记忆中。

3 AI记忆的分类 (Taxonomy of AI Memory) 报告提出了一个“4W Memory Taxonomy”框架,即When-What-HoW-Which,系统地对AI记忆系统进行分类:

3.1 按记忆生命周期分类 (Classification by Memory Lifecycle):考察记忆的时间跨度及其持久性。

瞬时记忆 (Transient Memory):极短寿命,仅存在于即时输入处理期间,作为感知输入的临时缓冲区(如Transformer中的KV Cache,Voyager处理的Minecraft像素输入)。高波动性。

会话记忆 (Session Memory):在单个任务执行或对话交互中持续存在,但在会话结束后不保留(如LLM的上下文窗口,MemoryOS中的会话级信息)。主动维护和操作信息。

持久记忆 (Persistent Memory):超越个体会话而存在,可跨多个交互、任务甚至智能体实例访问。存储在外部数据库、文件系统或模型参数中。分为参数性 (parametric) 和非参数性 (non-parametric)。

3.2 按记忆类型分类 (Classification by Memory Type):考察记忆捕获的知识性质及其功能角色。

程序性记忆 (Procedural Memory):封装可执行知识,包括技能、行动工作流和工具利用(如MemGPT中的多步骤规划序列,MemoryBank捕获的决策模式,Generative Agents的社交互动模式)。

声明性记忆 (Declarative Memory):存储事实性知识和感知观察(包括情景记忆和语义记忆)(如Generative Agents的记忆流,ReAct的观察结果,VideoAgent的视觉环境表示)。

元认知记忆 (Metacognitive Memory):关于智能体自身思维和能力的知识,使其能追踪性能、反思行动、调整策略(如Reflexion的反射性语言记忆,Memento的自我认知记忆)。

个性化记忆 (Personalized Memory):存储关于其他智能体和用户的信息,如偏好、行为和关系(如Mem0的用户偏好,MemoryBank的用户画像,MemoryOS的用户相关记忆系统)。

3.3 按记忆存储方式分类 (Classification by Memory Storage):考察记忆的物理形式、访问模式和计算特性。

隐式存储 (Implicit Storage):记忆存储在模型架构内部。

参数性记忆 (Parametric Memory):直接嵌入在模型参数和权重中,通过训练过程(预训练、微调、LoRA)获得(如Toolformer的工具使用模式,Baijia的角色扮演能力)。优点是无需显式检索的快速推理,但存在灾难性遗忘、更新成本高、可解释性有限等问题。

潜在记忆 (Latent Memory):通过模型学习到的潜在空间隐式表示(如MemoRAG的紧凑隐藏状态记忆,MemoryLLM的记忆token)。用于捕获复杂的、高维的知识表示。

显式存储 (Explicit Storage):记忆存储在模型外部,形式如文本、向量或图。

原始记忆 (Raw Memory):以文本、视觉或听觉格式存储信息(如对话历史、压缩对话)(如MemoryOS的文件文本存储,AMem的情景回忆)。可解释性最高,与LLM上下文窗口无缝集成。

向量记忆 (Vector Memory):将信息存储为高维语义空间中的稠密连续值向量,通过相似性检索(如FAISS)实现高效检索(如MemOS的向量化记忆,Mem0的向量编码记忆)。

图记忆 (Graph Memory):将信息编码为实体及其关系的显式网络,通常使用图数据库(如Neo4j)(如Zep的时间知识图谱,Mem0的图结构记忆,Cognee的知识图谱)。适用于复杂关系推理。

3.4 按模态类型分类 (Classification by Modality Type):考察记忆处理的信息格式。

单模态记忆 (Single-modal memory):处理单一模态的信息,文本是最成熟和广泛采用的形式。计算效率高,有效记忆跨度长(如MemoryOS的分层存储,Mem0的摘要和选择性更新,Zep的图基结构)。

多模态记忆 (Multimodal memory):整合来自多种模态的信息(文本、图像、音频、视频),通常由多模态基础模型支持。

原始模态表示 (Raw Modality Representation):将原始多模态数据编码为高维嵌入向量,以实现快速访问和特征复用(如Memory-QA的视觉记忆,Moviechat的嵌入式记忆,Optimus和JARVIS-1的嵌入式多模态记忆)。

苏格拉底表示范式 (Socratic Representation Paradigm):利用多模态到文本的抽象策略,将异构多模态输入转换为结构化文本描述(如Ego-LLaVA的视觉经验到文本,MIRIX和MM-VID的视觉流描述,M3-Agent的文本情景和语义记忆)。语言作为统一的跨模态中介,降低存储开销,提高可解释性。

4 单智能体系统中的AI记忆 (AI Memory in Single-Agent System) 本节系统概述了为单智能体系统设计的AI记忆架构和功能机制。

4.1 典型智能体记忆架构 (Typical Agent Memory Architecture):

分层记忆架构 (Hierarchical Memory Architecture):通过分层存储结构和动态管理解决LLM有限上下文窗口与长期信息存储需求之间的矛盾,模拟人类记忆的分层组织(如HMT的分层感官、短时、长时记忆,H-MEM的四级语义抽象)。

类操作系统记忆架构 (OS-like Memory Architectures):借鉴操作系统设计,采用分层存储和动态管理机制处理长期交互中的记忆一致性和资源分配(如MemGPT的页面调度技术,MemoryOS的“热度驱动”分段页面调度,MEMOS的MemCube单元统一管理异构知识)。

认知演化记忆架构 (Cognitive Evolution Memory Architectures):模拟人类认知过程或融入心智理论 (Theory of Mind),使智能体能够开发可演化的记忆和策略系统进行自我优化(如AUGUSTUS的“编码-存储-检索-行动”闭环,Nemori的“预测-校准”循环)。

图与时间记忆架构 (Graph and Temporal Memory Architectures):利用图结构(特别是知识图谱)来建模复杂关系依赖,编码时间动态以实现精确记忆生命周期管理,并提高推理准确性(如Zep的时间知识图谱,Mem0的图结构记忆,MemTree的树状分层结构)。

4.2 AI记忆的基本功能 (Basic Functions of AI Memory):

记忆存储 (Memory Storage):将分散的观察数据转换为结构化、持久的记忆记录,并建立标准化索引架构以支持后续检索。每个记忆单元配置时间戳、源标识符和结构化语义字段作为索引维度。存储内容分为程序性、声明性、元认知和个性化记忆。存储格式分为显式存储(可直接访问和解释)和隐式存储(编码在模型参数中)。

记忆检索 (Memory Retrieval):从大规模记忆存储库中精确检索和整合信息,指导生成过程,减少幻觉并增强推理能力。主要分为:

向量化检索 (Vector-based retrieval):将离散记忆内容映射到嵌入向量空间,通过语义相似性计算进行检索(如RAG架构)。

分层检索 (Hierarchical retrieval):将记忆结构化为语义抽象层级,先定位宏观意图再深入细节。

图基检索 (Graph-based retrieval):将记忆元素表示为相互连接的节点和边缘,模拟人类联想记忆机制(如Zep的Graphiti引擎,Mem0)。

多模态检索 (Multimodal retrieval):将视觉信息与语义标签集成,扩展检索任务范围(如HippoMM对视听流的结构化,MovieChat的密集观察到稀疏记录的凝练)。

记忆更新 (Memory Updating):修订、替换或整合现有存储内容,纠正错误或过时信息,并在新数据到达时整合碎片化知识。分为:

增量更新 (Incremental updates):连续将新感知经验和信息注入记忆库而不干扰现有知识(如Zep的非损耗性增量合成,MemoryLLM/M+的潜空间扩张)。

纠正性更新 (Corrective updates):旨在纠正模型内过时或错误知识(如H-MEM的动态权重调节,WISE的双参数记忆方案)。

整合更新 (Consolidation updates):通过语义抽象和碎片化记忆的摘要优化存储结构,提高检索效率(如MemoryOS的“热度驱动”整合,LightMem的认知启发式睡眠时间整合,MemoryField的引力场融合)。

遗忘更新 (Forgetting updates):通过算法主动删除或抑制冗余、敏感或低价值信息(如MEOW的“倒置事实”标签)。

4.3 AI记忆的高级功能 (Advanced Functions of AI Memory):

自我演化 (Self-Evolution):智能体通过持续交互和任务执行,动态迭代和优化所获取的知识、技能和行为策略的能力。将AI经验提炼为可演化的结构(如适应性目标、可调节约束、更新的因果关系、迭代行动模式),从而减少增量学习成本,提高对噪声和新颖性的鲁棒性(如LightSearcher的推理和工具调用轨迹蒸馏,Voyager的扩展可执行技能库)。

关联 (Association):将多模态信号(文本、视觉、音频、交互)整合到连贯的情境模型中以构建记忆。通过融合(实体、时间戳、位置)、跨注意力机制和图式链接,减少歧义,提高引用解析,保持记忆跨帧/对话的连续性(如M3-Agent的动作/事实记忆图谱,Mem-0g的实体/关系保留)。

4.4 单智能体记忆范式的局限性 (Limitations of Single-Agent Memory Paradigms):

记忆错位 (Memory Misalignment):智能体对全局状态的感知分歧,导致输出基于过时或碎片化的私人记忆,损害系统连贯性。

冗余循环 (The Redundancy Cycle):缺乏统一的进度记录,导致智能体重复劳动,浪费计算资源和存储空间。

集体智能停滞 (Stagnation of Collective Intelligence):记忆隔离阻碍共享知识的积累,导致宝贵见解(如API解决方案)被孤立,后续智能体无法利用基础知识库,阻碍集体智能演化。

5 多智能体系统中的记忆机制 (Memory Mechanisms in Multi-Agent Systems, MAS) 本节探讨MAS中集体智能的基础架构,旨在弥合瞬时交互与持久知识之间的鸿沟,解决孤立记忆模型的局限性。

5.1 MAS中的通信机制 (Communication Mechanisms in MAS):MAS中有效协作依赖于通过记忆共享实现的通信。主要有两种模态:显式符号交换和隐式状态协调。

显式通信 (Explicit Communication):智能体之间符号信息的刻意传输。

非结构化自然语言 (Unstructured Natural Language):智能体通过自然语言交互协调,由明确的角色提示指导(如ChatDEV)。灵活性高,但存在歧义、冗余、token消耗高的问题。

结构化数据模式 (Structured Data Schemas):限制智能体间信息交换为预定义、机器可解释的格式(如MetaGPT强制使用UML图表)。高保真,可靠,将结构化数据作为记忆片段传输。

动态分配 (Dynamic Allocation):信息不再依赖静态一对一交互,而是根据任务需求从共享记忆空间动态路由到相关智能体(如RCR-Router)。将信息生产与消费解耦,实现更灵活和可扩展的智能体协作。

隐式通信 (Implicit Communication):在没有直接智能体间信息传递的情况下,通过个体智能体程序内部处理实现协调。

潜在表示 (Latent Representation):智能体直接共享其内部的连续潜在表示(隐藏嵌入),而非离散的自然语言token(如LatentMAS,”Dense Communication”和”Thought-to-Thought”交互)。旨在实现高表达能力。

压缩知识 (Compressed Knowledge):将压缩机制应用于模型的最终隐藏状态,以优化推理效率同时保留语义保真度(如Interlat框架)。通过高效压缩技术直接传输潜在状态,使智能体能更好地利用微妙的内部信息。

5.2 MAS中的记忆共享机制 (Memory Sharing Mechanism in MAS):共享记忆是集体智能的基础,研究在两个粒度优化:任务级和步骤级。

任务级记忆共享 (Task-Level Memory Sharing):从不同任务执行中整合经验,促进长期演化和跨领域迁移。

同质经验积累 (Homogeneous Experience Accumulation):智能体团队在特定任务执行中积累经验,将原始历史数据转化为可演化的智慧或经验,通过记忆抽象提炼高层见解、程序技能和抽象策略(如G-Memory,SEDM的推理轨迹蒸馏,MemoryOS++1的群体经验搜索)。

异质信息传输 (Heterogeneous Information Transfer):促进执行异构任务的不同智能体之间的信息交换,建立共享知识池,使智能体能检索和复制同伴已验证的解决方案路径(如MS的横向知识转移)。

步骤级记忆共享 (Step-Level Memory Sharing):在单个协作工作流的粒度执行阶段,动态分配特定信息给相关智能体。旨在解决多智能体协作中的“噪声-上下文权衡”问题,通过上下文路由实现,而非广播全局状态。系统分析每个智能体的功能角色和任务的当前阶段,仅传递关键信息片段(如RCR-Router)。

6 AI记忆的评估 (Evaluation on AI Memory) 报告提出了评估LLM驱动智能体记忆的综合分类法,包括四个核心维度。

6.1 记忆机制的评估指标 (Evaluation Metrics for Memory Mechanisms):

记忆检索能力 (Memory Retrieval Capability):

检索性能 (Retrieval Performance):直接评估记忆模块的质量,关注覆盖率、精确度和排名质量(指标:Recall@\(k\),Precision@\(k\),NDCG@\(k\))。

响应正确性 (Response Correctness):通过下游任务的成功率间接评估检索质量,答案可直接从原文中找到(指标:Accuracy,F1-Score,BLEU-N,ROUGE-L)。

动态更新能力 (Dynamic Updating Capability):

记忆修改 (Memory Modification):评估系统在新冲突信息出现时正确修改现有记录的能力(指标:Update Accuracy,Hallucination Rate,Omission Rate)。

记忆写入 (Memory Writing):评估将原始交互文本转换为存储记忆的忠实度和完整性(指标:Memory Recall,Memory Accuracy,F1-score)。

记忆遗忘 (Memory Forgetting):指算法上选择性地删除特定数据对模型参数记忆的影响,同时保留不相关知识的完整性(指标:Truth Ratio,ROUGE-L Recall)。

高级认知能力 (Advanced Cognitive Capability):

泛化能力 (Generalization):LLM有效将所获知识或技能迁移和应用于未见任务的能力(指标:Success Rate)。

时间感知 (Temporal Perception):智能体在交互中维护和更新事件和实体状态连贯时间线的能力(指标:Kendall’s \(\tau\),Accuracy)。

个性化 (Personalization):智能体利用长时记忆根据用户历史、身份和行为模式提供定制服务的能力(指标:Accuracy,Human or LLM-based Scoring)。

系统效率 (System Efficiency):评估实际部署的工程可行性,对可扩展性和用户体验至关重要。

延迟 (Latency):特定操作(如检索或写入)的精确时间成本(指标:Percentile Latency)。

token开销 (Token Overhead):将检索到的记忆打包到提示中单轮交互所消耗的token总数(指标:Tokens Consumed)。

存储效率 (Storage Efficiency):记忆模块在有限约束下尽可能减少物理存储占用,同时保留所有关键信息的能力(指标:Storage Cost)。

6.2 评估基准 (Evaluation Benchmarks):

静态记忆评估 (Static Memory Evaluation):强调从固定、非更新输入中检索记忆(例如:LoCoMo、EpisodicGen、LongBench、RULER、HotpotQA)。

动态记忆评估 (Dynamic Memory Evaluation):评估智能体管理记忆更新和适应演变上下文的核心能力(例如:MemoryAgentBench、MemoryBench、HaluMem、DialSim、BEAM)。

个性化记忆评估 (Personalization Memory Evaluation):评估记忆合成和维护演变用户档案及个性化偏好的关键能力(例如:MemBench、MemSim、PERSONAMEM、LongMemEval、PerLTQA、PREFEVAL)。

环境记忆评估 (Environment Memory Evaluation):评估记忆在复杂外部环境中支持顺序行动的实际效果(例如:WebChoreArena、MT-Mind2Web、StoryBench)。

多模态记忆评估 (Multimodal Memory Evaluation):测试记忆对异构非文本模态的时空信息进行对齐和检索的能力(例如:Video-MME、MLVU、LVBench、M3-Bench、EgoSchema、EgoLifeQA、Memory-QA、MMNeedle)。

6.3 评估挑战 (Evaluation Challenges):

数据集构建 (Dataset Construction):缺乏统一、高质量的数据集。

性能归因的模糊性 (Ambiguity in Performance Attribution):难以隔离记忆的贡献。

评估指标的困境 (Dilemma of Evaluation Metrics):没有单一指标能捕捉其全部复杂性。

该报告通过综合认知理论与工程基准,为AI记忆的理论理解和技术发展提供了一条连贯的路线图。